For the fourth year running, Coderus set out to inspire East Anglia’s tech community and we are delighted to say that we succeeded!

Sponsored by Tech East and BT, in association with the tech cluster, Innovation Martlesham, Coderus invited delegates to immerse themselves in exciting tech developments before watching the annual Google I/O Developer Conference, streamed live from Mountain View, California.

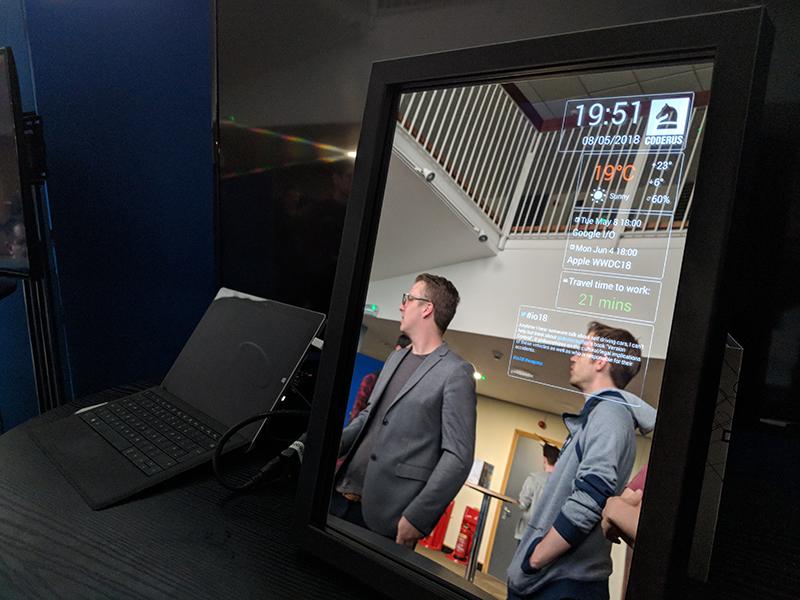

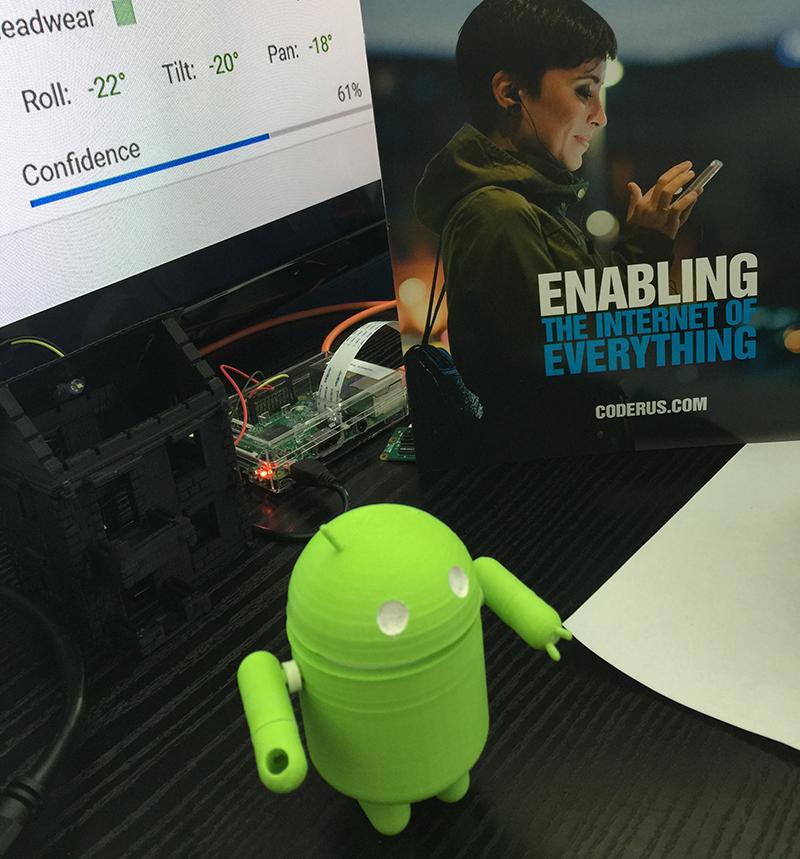

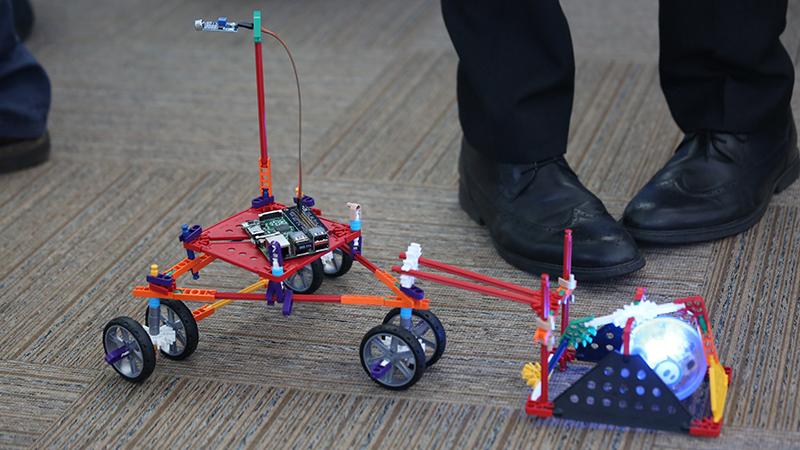

Doors opened at 16:30, when Coderus developers gave enthusiastic demonstrations of embedded technology to the audience. “These demos show just a few ways that we’re able to provide high-quality software, as well as our ability to work with a wide range of hardware and embedded devices,” said Mark Thomas, CEO of Coderus.

Embedded tech in action: this Sphero, programmed by students at the Royal Hospital School, Holbrook, makes its way around the school and measures noise levels in different areas.

Also demonstrating was the University of Suffolk’s Chris Janes, course leader for Computer Games Programming. He was accompanied by course graduate Tom Andrews, who showcased his AR (augmented reality) app, which uses gamification techniques to help children correctly administer medication via their asthma inhalers.

Coderus is passionate about helping even the youngest schoolchildren gain access to tech and is an active supporter of the charitable foundation the Creative Computing Club. Its founder, Matthew Applegate, brought nine children from around the Ipswich area to the event to inspire them to turn their learning into a career.

Matthew said: “There is still a preconception among young people that they need to go to London or Silicon Valley to get a job in tech. What’s so great about this Coderus event is that it shows these young people the kinds of jobs that they can get into, right here on their doorstep.”

It’s a theme echoed by event sponsor Morag McInnes, Economic Development Officer for East Suffolk Council, whose mission is to engage more students at both GCSE and A Level with tech. Morag said: “The Coderus Google I/O event is a real eye-opener. It’s just great to see so many young people here.”

Business Partners and Innovation Martlesham

There was also a strong business presence at the event, with representatives from Biddable Solutions, Matt Porter Web Design and Ambient Light exhibiting.

“As a Google partner, we are naturally very interested to see what new developments can bring to the table in terms of supporting our customers and growing their online revenues,” said panellist James Giles of PPC solutions provider Biddable Solutions.

All three firms are members of the Innovation Martlesham tech cluster, headed by Nicky Daniels. Nicky – also a panellist prior to the opening of the live stream – said:

“It was wonderful to see so many enthusiastic and inspired faces attend the Google I/O yesterday. Innovation Martlesham is dedicated to providing support to growing companies, and we hope that events like this will encourage the next generation to join established companies like Coderus here in the tech cluster as we collaborate and innovate together.”

Nicky Daniels, Panellist

“Coderus is an innovative and leading-edge software development company whom we are very proud to have as part of our tech cluster here at Adastral Park. It has been a pleasure to see them grow, and we were delighted to be able to assist with their running of the Google I/O live stream event. I look forward to seeing more such events and opportunities realised at the Park.”

Lisa Perkins, Director of Research and Innovation for BT

Like the look of the Google I/O Extended event? Make sure to join Coderus for the Apple Worldwide Developers Conference on 4th June. Hosted at the Ipswich Waterfront Innovation Centre, the Apple WWDC event will feature showcase demos, a live stream of the keynote speech, and of course, cupcakes.

Header image (l-r): Panellists James Giles (Biddable Solutions), Nicky Daniels (Innovation Martlesham), Mark Thomas (Coderus), Mohamed Abdel-Maguid (University of Suffolk).